Deploying the model

You can use an OpenShift AI model server to deploy the model as an API.

Prerequisites

- You have saved the model as described in Preparing the model for deployment.
- You have installed KServe and enabled the model serving platform.
- You have enabled a preinstalled or custom model-serving runtime.
- You have obtained values for the following S3 storage parameters:
  - Access Key
  - Secret Key
  - Endpoint
  - Region
  - Bucket

  To obtain these values, navigate to your project’s Connections tab. For the My Storage connection, click the action menu (⋮) and then click Edit.
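If you work in a workbench that has the storage connection attached, a quick check like the following can confirm that all five values are available before you start the wizard. This is a minimal sketch: the environment variable names are an assumption about how the connection is exposed in your environment, and `missing_storage_parameters` is a hypothetical helper, not part of OpenShift AI.

```python
import os

# Assumed mapping from the dashboard labels to environment variable names.
# Confirm these names against your own connection before relying on them.
REQUIRED_VARS = {
    "Access Key": "AWS_ACCESS_KEY_ID",
    "Secret Key": "AWS_SECRET_ACCESS_KEY",
    "Endpoint": "AWS_S3_ENDPOINT",
    "Region": "AWS_DEFAULT_REGION",
    "Bucket": "AWS_S3_BUCKET",
}


def missing_storage_parameters(env=None):
    """Return the dashboard labels of parameters that are unset or empty."""
    env = os.environ if env is None else env
    return [label for label, var in REQUIRED_VARS.items() if not env.get(var)]


if __name__ == "__main__":
    missing = missing_storage_parameters()
    if missing:
        print("Missing storage parameters:", ", ".join(missing))
    else:
        print("All five storage parameters are set.")
```

The helper accepts an optional dictionary so that you can test it without touching the real environment.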

Procedure

- In the OpenShift AI dashboard, navigate to the project details page and click the Deployments tab.
- Click Deploy model.

  The Deploy a model wizard opens.

- In the Model details section, provide information about the model:
  - For Model location, select Existing connection and then select My Storage.
  - Enter the following values from your S3 storage connection:
    - Access Key
    - Secret Key
    - Endpoint
    - Region
    - Bucket
  - For Path, enter `models/fraud`.
  - For Model type, select Predictive model.
  - Click Next.

- In the Model deployment section, configure the deployment:
  - For Model deployment name, enter `fraud`.
  - For Description, enter a description of your deployment.
  - For the hardware profile, keep the default value.
  - For Model framework (name - version), select `onnx-1`.
  - For the Serving runtime field, accept the auto-selected runtime, OpenVINO Model Server.
  - Click Next.

- In the Advanced settings section, accept the defaults by clicking Next.
- In the Review section, click Deploy model.
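For orientation, the wizard choices above correspond roughly to a KServe `InferenceService` resource. The following sketch builds such a manifest as a Python dictionary. It is an approximation under stated assumptions, not the exact resource that the dashboard generates: the connection secret name `my-storage` and the runtime name `fraud` are hypothetical placeholders, and `build_inference_service` is an illustrative helper.

```python
import json


def build_inference_service(name, connection_secret, path, model_format, version, runtime):
    """Build a KServe InferenceService manifest as a plain dictionary."""
    return {
        "apiVersion": "serving.kserve.io/v1beta1",
        "kind": "InferenceService",
        "metadata": {"name": name},
        "spec": {
            "predictor": {
                "model": {
                    # Model framework (name - version) from the wizard: onnx-1
                    "modelFormat": {"name": model_format, "version": version},
                    # Name of the ServingRuntime resource that serves the model
                    "runtime": runtime,
                    # Secret that holds the S3 connection, and the path in the bucket
                    "storage": {"key": connection_secret, "path": path},
                }
            }
        },
    }


manifest = build_inference_service(
    name="fraud",
    connection_secret="my-storage",  # hypothetical secret name for the My Storage connection
    path="models/fraud",
    model_format="onnx",
    version="1",
    runtime="fraud",  # hypothetical runtime name; check the one in your project
)
print(json.dumps(manifest, indent=2))
```

Comparing this output with the resource in your cluster can help when you troubleshoot a deployment, but treat the dashboard-generated resource as the source of truth.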

-

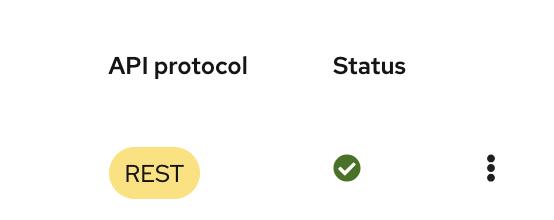

Confirm that the deployed model is shown on the Deployments tab for the project, and on the Deployments page of the dashboard with a Started status.
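After the model shows a Started status, you can query it as an API. The sketch below prepares a request for the Open Inference Protocol (v2) REST endpoint that KServe model servers commonly expose. Everything specific in it is an assumption for illustration: the base URL, the input tensor name `dense_input`, the number of features, and the sample values are placeholders that you must replace with the details of your own model and endpoint.

```python
import json
import urllib.request


def build_infer_request(base_url, model_name, input_name, rows):
    """Build a POST request for the v2 inference REST endpoint of a deployed model."""
    payload = {
        "inputs": [
            {
                "name": input_name,
                # One row per sample, one column per feature
                "shape": [len(rows), len(rows[0])],
                "datatype": "FP32",
                # The protocol accepts tensor data flattened in row-major order
                "data": [value for row in rows for value in row],
            }
        ]
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/v2/models/{model_name}/infer",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_infer_request(
    base_url="https://fraud.example.com",  # placeholder; use your inference endpoint
    model_name="fraud",  # the Model deployment name entered in the wizard
    input_name="dense_input",  # hypothetical input tensor name
    rows=[[0.3, 1.9, 1.0, 0.0, 0.0]],  # placeholder feature values
)
print(request.full_url)

# To send the request against a real endpoint:
# with urllib.request.urlopen(request) as response:
#     print(json.load(response))
```

Building the request separately from sending it lets you inspect the URL and payload before you contact the server. If your endpoint requires token authentication, add the appropriate `Authorization` header.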